Evaluation Environment Components

Overview

The BPaaS Evaluation Environment is a companion to the CloudSocket broker that helps the broker optimise the BPaaS services offered. This assistance takes several forms, each mapping to a different type of analysis: (a) KPI evaluation, to assess the performance of a BPaaS; (b) KPI drill-down, to find the root causes of a particular KPI violation; (c) best BPaaS deployment discovery, to infer the best deployments for a certain BPaaS; (d) process model recreation via process mining. On top of this functionality sits the Hybrid Business Dashboard, which lets the broker invoke the analysis functionality and visualise the analysis results using suitable visualisation metaphors. The dashboard is shared between the BPaaS Design and Evaluation Environments, which highlights the close relationship between them: the results of one environment (evaluation) can be used to optimise the main artefacts of the other (design). Sharing the dashboard also allows a fast switch from one environment to the other when a need for optimisation is spotted and has to be acted on at once. In addition, the results of one kind of analysis, namely best BPaaS deployment discovery, can also be exploited by the BPaaS Allocation Environment when searching for the best deployment of a certain BPaaS.

Each analysis functionality is offered by a composite component in the form of an engine. KPI evaluation and drill-down are offered by the Conceptual Analytics Engine, best BPaaS deployment discovery is offered by the Deployment Discovery Engine, and the capability to execute process mining algorithms is offered by the Process Mining Engine. To support these engines in their analysis tasks, a further engine, the Harvesting Engine, was developed. Its main goal is to harvest information from different information sources (i.e., components) in the CloudSocket architecture, to semantically uplift it with the assistance of two ontologies (Evaluation and KPI OWL-Q Extension), and finally to store it in the Semantic Knowledge Base. The latter has been realised as an API built on top of an existing triple store.

The BPaaS Evaluation Environment, like the BPaaS Design Environment, supports multi-tenancy out of the box. This has been realised mainly through the Harvesting Engine, which stores the harvested and semantically uplifted information in broker-specific fragments (i.e., RDF graphs) inside the Semantic Knowledge Base. The system can therefore serve a broker by considering only the fragment that belongs to that broker, so every analysis functionality operates on a specific portion of the Semantic Knowledge Base content rather than on the whole of it.
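The idea of broker-specific fragments can be illustrated with a minimal sketch. It is not the actual Semantic Knowledge Base API: the class `TinyKnowledgeBase`, the base IRI `GRAPH_BASE`, and the `ex:` resource names are hypothetical, and a plain in-memory dictionary stands in for the triple store. The only point shown is that each broker's triples live in their own named graph and that queries are scoped to one graph.

```python
from collections import defaultdict

# Hypothetical base IRI for broker graphs (an assumption, not a CloudSocket value).
GRAPH_BASE = "http://example.org/graphs/broker/"


class TinyKnowledgeBase:
    """In-memory stand-in for a triple store that supports named graphs."""

    def __init__(self):
        # Maps a graph IRI to the set of (subject, predicate, object) triples in it.
        self._graphs = defaultdict(set)

    @staticmethod
    def graph_iri(broker_id):
        """Return the IRI of the graph that holds one broker's fragment."""
        return GRAPH_BASE + broker_id

    def add(self, broker_id, subject, predicate, obj):
        """Store a triple inside the fragment of the given broker."""
        self._graphs[self.graph_iri(broker_id)].add((subject, predicate, obj))

    def match(self, broker_id, subject=None, predicate=None, obj=None):
        """Return the broker's triples matching the pattern; None is a wildcard."""
        pattern = (subject, predicate, obj)
        triples = self._graphs.get(self.graph_iri(broker_id), set())
        return sorted(
            t for t in triples
            if all(p is None or p == v for p, v in zip(pattern, t))
        )


kb = TinyKnowledgeBase()
kb.add("brokerA", "ex:bpaas1", "ex:hasKPI", "ex:availability")
kb.add("brokerB", "ex:bpaas9", "ex:hasKPI", "ex:responseTime")

# Only brokerA's fragment is consulted; brokerB's data is never visible here.
print(kb.match("brokerA", predicate="ex:hasKPI"))
```

With a real triple store, the same scoping would typically be expressed by restricting a SPARQL query to the broker's named graph.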

As indicated in the analysis of each individual main component of the BPaaS Evaluation Environment, most of the components can be replaced by external ones developed by organisations outside the CloudSocket consortium. This is possible because of the loose coupling between the components, the development of an abstraction API, and the exchange of standard formats wherever possible. There are two main exceptions to this rule: (a) the Hybrid Business Dashboard component is shared between the two environments, so replacing it must also be supported by the BPaaS Design Environment; (b) the Harvesting Engine does not offer a REST API but is executed periodically in the form of a thread. Reproducing this engine requires a high level of expertise in semantic technologies as well as good knowledge of many of the APIs offered by the CloudSocket platform. The developer also needs to know which information these APIs offer and how it can be correlated, so that appropriate semantic relationships between the created resources can be expressed according to the current schema of the Evaluation and KPI (OWL-Q Extension) ontologies. We therefore consider the development of such a component difficult but not impossible.
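To make the "executed periodically in the form of a thread" pattern concrete, the following is a minimal sketch of a periodic background task. It does not reproduce the Harvesting Engine: the class name `PeriodicHarvester`, the `harvest_once` callback, and the interval parameter are all hypothetical, and the actual harvesting, uplifting, and storing logic is left to the callback.

```python
import threading


class PeriodicHarvester:
    """Runs a harvesting callback repeatedly in a background thread."""

    def __init__(self, harvest_once, interval_seconds):
        # harvest_once is a hypothetical callable performing one harvesting cycle.
        self._harvest_once = harvest_once
        self._interval = interval_seconds
        self._stop_event = threading.Event()
        self._thread = threading.Thread(target=self._loop, daemon=True)

    def _loop(self):
        # Run one cycle, then sleep until the interval elapses or stop() is called.
        while not self._stop_event.is_set():
            self._harvest_once()
            self._stop_event.wait(self._interval)

    def start(self):
        self._thread.start()

    def stop(self):
        """Ask the loop to finish and wait for the thread to exit."""
        self._stop_event.set()
        self._thread.join()

    def is_alive(self):
        return self._thread.is_alive()
```

A caller would construct the object with its own harvesting function, call `start()` once, and call `stop()` on shutdown. Waiting on an event rather than sleeping lets `stop()` interrupt the pause between cycles immediately.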

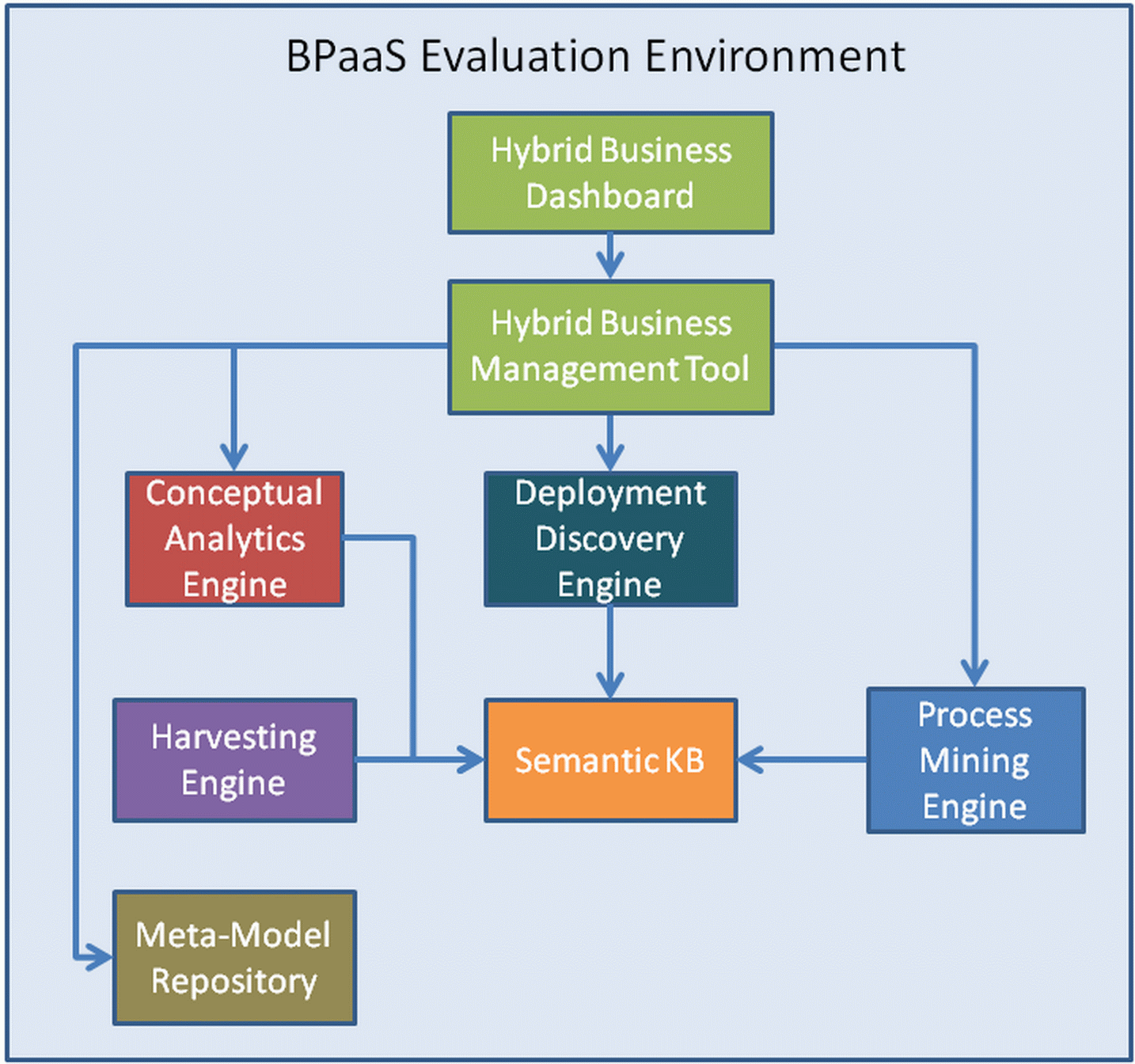

An overview of the architecture of the BPaaS Evaluation Environment is depicted in the accompanying figure. The architecture comprises three main levels: (a) UI; (b) business logic; (c) data. At the UI level resides the Hybrid Business Dashboard, which is responsible for invoking the different analysis components offered. The business logic level comprises all the analysis engines (Conceptual Analytics Engine, Deployment Discovery Engine, and Process Mining Engine) as well as the Harvesting Engine. On top of the analysis engines resides the Hybrid Business Management Tool, which orchestrates the analysis functionality and acts as the bridge between the UI and the business logic level. Finally, at the data level, the Semantic Knowledge Base enables the querying and management of the semantic information stored in it.

Architecture of the BPaaS Evaluation Environment.

More information about this architecture, including how the components interact within the same level or across adjacent levels and which interaction scenarios are covered, can be found at the following URL: where corresponding UML diagrams can be viewed. This information was also covered in the D4.5 deliverable.

Below, we provide links to all the components of the BPaaS Evaluation Environment. Each link leads to a more thorough analysis of the component, catering for different types of audience, including developers and operation managers. An overview of these components is also provided in the D4.6 deliverable.